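0. Preface

Video recording on Android has long been a pain point. On a short-video app I worked on previously, recording was built on FFmpeg; despite extensive optimization, image quality and frame rate still fell short of the requirements, and certainly short of what iOS delivers. That pushed me to dig into hardware encoding on Android.

Hardware encoding

Hardware encoding means encoding video frames on dedicated hardware such as a DSP rather than on the CPU. Compared with software encoding it is far more efficient, and higher encoding efficiency means higher resolution and better image quality at the same frame rate.

1. General Android video recording workflow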

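1.1 General workflow

(1) Video capture

Obtain video frames from the Camera

(2) Frame processing

Apply video filters, face detection, and so on

An RGB-to-YUV conversion may also be needed

(3) Encoding

FFmpeg/MediaCodec

(4) Mux

FFmpeg/MediaMuxer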
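
Step (2) mentions a possible RGB-to-YUV conversion. As a rough illustration of what that involves, here is a per-pixel conversion using the full-range BT.601 coefficients. The class and method names are made up for this sketch, and the choice of BT.601 is an assumption; a real pipeline would normally do this on the GPU or in native code rather than pixel by pixel in Java.

```java
// Illustrative only: RgbToYuv is a hypothetical helper, not part of Android or FFmpeg.
final class RgbToYuv {
    private static int clamp(long v) {
        return (int) Math.max(0, Math.min(255, v));
    }

    /** Converts one 8-bit RGB pixel to {Y, U, V} using full-range BT.601 coefficients. */
    static int[] toYuv(int r, int g, int b) {
        long y = Math.round(0.299 * r + 0.587 * g + 0.114 * b);
        long u = Math.round(-0.168736 * r - 0.331264 * g + 0.5 * b + 128);
        long v = Math.round(0.5 * r - 0.418688 * g - 0.081312 * b + 128);
        return new int[] { clamp(y), clamp(u), clamp(v) };
    }
}
```

For example, `RgbToYuv.toYuv(0, 0, 0)` yields full black luma with neutral chroma (U and V both at 128).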

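1.2 Challenges for short-video apps

Short-video apps record in real time and need a frame rate of at least 24 FPS. Most of them also need video filters, face detection, stickers placed on faces, and similar features. At the 24 FPS minimum, each frame may spend at most 1000/24 = 41.67 ms in each stage; beyond that, frames are dropped and the video stutters.

Capture (1) and muxing (4) are rarely bottlenecks. The bottlenecks are frame processing (2) and encoding (3): even when the stages run asynchronously, each one must still finish a frame within about 42 ms.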
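
The budget arithmetic above can be written down as a small check. This is only a sketch: `FrameBudget` and its methods are hypothetical names, and it assumes the stages run in parallel, so the slowest stage is what determines whether the pipeline keeps up.

```java
// Illustrative sketch; FrameBudget and its methods are hypothetical names.
final class FrameBudget {
    /** Time available per frame, in milliseconds, at the given frame rate. */
    static double budgetMs(int fps) {
        return 1000.0 / fps;
    }

    /** True if the slowest stage fits in the per-frame budget when stages run in parallel. */
    static boolean keepsUp(int fps, double... stageMs) {
        double slowest = 0;
        for (double ms : stageMs) {
            slowest = Math.max(slowest, ms);
        }
        return slowest <= budgetMs(fps);
    }
}
```

With measured stage times of 5, 30, 40 and 2 ms, `keepsUp(24, 5, 30, 40, 2)` holds; raise the encoding stage to 45 ms and it no longer does.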

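2. Hardware encoding on Android

2.1 API level requirements

Hardware video encoding on Android relies on MediaCodec and MediaMuxer, both of which have minimum API levels.

MediaCodec

API Level >= 16; a few very important methods require 18 or higher, such as createInputSurface

MediaMuxer

API Level >= 18

MediaRecorder

API Level >= 1, but the important method setInputSurface requires API Level >= 23.

Devices below API Level 18 are rare now, and those that remain are so underpowered that even software encoding produces very poor video on them.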
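
One way to act on these thresholds is to pick the encoding path from the device's SDK level. The sketch below is an assumption about how that decision could look, not code from the project; the enum and class are hypothetical, and only the API levels (16 and 18) come from the text. On a device the argument would be `Build.VERSION.SDK_INT`.

```java
// Illustrative sketch; the enum and class are hypothetical, only the API levels come from the text.
final class EncoderPathChooser {
    enum Path { SOFTWARE_FFMPEG, MEDIACODEC_BYTEBUFFER, MEDIACODEC_SURFACE }

    static final int API_MEDIACODEC = 16;        // MediaCodec available
    static final int API_SURFACE_AND_MUXER = 18; // createInputSurface and MediaMuxer available

    static Path choose(int sdkInt) {
        if (sdkInt >= API_SURFACE_AND_MUXER) return Path.MEDIACODEC_SURFACE;
        if (sdkInt >= API_MEDIACODEC) return Path.MEDIACODEC_BYTEBUFFER;
        return Path.SOFTWARE_FFMPEG;
    }
}
```

Note that on API 16 and 17 MediaMuxer is not available, so the ByteBuffer path would still need FFmpeg (or another muxer) to write the container.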

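2.2 Limited device compatibility

Hardware encoding depends heavily on the phone's hardware platform, and some models have turned out to be incompatible. Software encoding therefore cannot be dropped entirely; it remains the fallback when hardware encoding fails.

2.3 Pipeline

//TODO Hardware encoding on Android Platform

3. Camera data capture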

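The main steps are:

- Open the camera

- Set the texture

- Set parameters

- Start capture

- Stop

    // (1) Open the camera
    cam = Camera.open();

    // (2) Set the preview target. setPreviewTexture takes a SurfaceTexture
    //     (created from the GL texture ID), not the raw texture ID itself.
    cam.setPreviewTexture(surfaceTexture);

    // (3) Set the camera parameters
    Camera.Parameters parameters = cam.getParameters();
    cam.setParameters(parameters);

    // (4) Start capture
    cam.startPreview();

    // (5) Stop
    cam.stopPreview();
    cam.release();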

3.1 Opening the Camera

Based on the Grafika code:

    try {
        if (Build.VERSION.SDK_INT > Build.VERSION_CODES.FROYO) {
            // Look for a camera facing the requested direction
            int numberOfCameras = Camera.getNumberOfCameras();
            Camera.CameraInfo cameraInfo = new Camera.CameraInfo();
            for (int i = 0; i < numberOfCameras; i++) {
                Camera.getCameraInfo(i, cameraInfo);
                if (cameraInfo.facing == facing) {
                    mDefaultCameraID = i;
                    mFacing = facing;
                }
            }
        }
        // Release any camera that is still open before opening a new one
        stopPreview();
        if (mCameraDevice != null)
            mCameraDevice.release();
        if (mDefaultCameraID >= 0) {
            mCameraDevice = Camera.open(mDefaultCameraID);
        } else {
            mCameraDevice = Camera.open();
            mFacing = Camera.CameraInfo.CAMERA_FACING_BACK; // default: back facing
        }
    } catch (Exception e) {
        LogUtil.e(TAG, "Open Camera Failed!");
        e.printStackTrace();
        mCameraDevice = null;
        return false;
    }

3.2 Camera parameters

The most important parameters:

setPreviewSize

setPictureSize

setFocusMode

setPreviewFrameRate

setPictureFormat

To set the width and height, see the following code:

    /**
     * Attempts to find a preview size that matches the provided width and height (which
     * specify the dimensions of the encoded video). If it fails to find a match it just
     * uses the default preview size for video.
     *
     * TODO: should do a best-fit match, e.g.
     * https://github.com/commonsguy/cwac-camera/blob/master/camera/src/com/commonsware/cwac/camera/CameraUtils.java
     */
    public static void choosePreviewSize(Camera.Parameters parms, int width, int height) {
        // We should make sure that the requested MPEG size is less than the preferred
        // size, and has the same aspect ratio.
        Camera.Size ppsfv = parms.getPreferredPreviewSizeForVideo();
        if (ppsfv != null) {
            Log.d(TAG, "Camera preferred preview size for video is " +
                    ppsfv.width + "x" + ppsfv.height);
        }

        //for (Camera.Size size : parms.getSupportedPreviewSizes()) {
        //    Log.d(TAG, "supported: " + size.width + "x" + size.height);
        //}

        // Use the requested size if the camera supports it exactly
        for (Camera.Size size : parms.getSupportedPreviewSizes()) {
            if (size.width == width && size.height == height) {
                parms.setPreviewSize(width, height);
                return;
            }
        }

        Log.w(TAG, "Unable to set preview size to " + width + "x" + height);
        if (ppsfv != null) {
            parms.setPreviewSize(ppsfv.width, ppsfv.height);
        }
        // else use whatever the default size is
    }
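
The TODO in the comment above asks for a best-fit match when no exact size exists. One possible rule, sketched below, is to prefer the closest aspect ratio and break ties by the closest pixel count. `PreviewSizePicker` and its `Size` class are hypothetical stand-ins (the latter for `Camera.Size`), and the scoring rule itself is an assumption rather than what Grafika or cwac-camera actually do.

```java
import java.util.List;

// Illustrative sketch; Size stands in for Camera.Size, and the scoring rule is an assumption.
final class PreviewSizePicker {
    static final class Size {
        final int width, height;
        Size(int width, int height) { this.width = width; this.height = height; }
    }

    /** Picks the supported size with the closest aspect ratio, then the closest pixel count. */
    static Size bestFit(List<Size> supported, int width, int height) {
        double wantedRatio = (double) width / height;
        long wantedPixels = (long) width * height;
        Size best = null;
        double bestRatioDiff = Double.MAX_VALUE;
        long bestPixelDiff = Long.MAX_VALUE;
        for (Size s : supported) {
            double ratioDiff = Math.abs((double) s.width / s.height - wantedRatio);
            long pixelDiff = Math.abs((long) s.width * s.height - wantedPixels);
            boolean betterRatio = ratioDiff < bestRatioDiff - 1e-3;
            boolean sameRatio = Math.abs(ratioDiff - bestRatioDiff) <= 1e-3;
            if (betterRatio || (sameRatio && pixelDiff < bestPixelDiff)) {
                best = s;
                bestRatioDiff = ratioDiff;
                bestPixelDiff = pixelDiff;
            }
        }
        return best; // null only if the list is empty
    }
}
```

The result would then be passed to `setPreviewSize(best.width, best.height)` in place of the preferred-size fallback.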

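Setting the image format

This parameter needs care:

If the frames need no processing, set the preview format to YUV so that they can be handed straight to the encoder, provided the encoder accepts that layout; otherwise a format conversion is still needed.

    mParams.setPreviewFormat(ImageFormat.YV12);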
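
When YV12 frames are read back as byte arrays, the buffer size is not simply width x height x 1.5, because the format pads each row to a 16-byte stride. The sketch below follows the layout described in the Android documentation for `ImageFormat.YV12`; `Yv12Layout` is a hypothetical helper name.

```java
// Illustrative sketch; Yv12Layout is a hypothetical helper that mirrors the layout
// documented for ImageFormat.YV12 (strides aligned to 16 bytes).
final class Yv12Layout {
    private static int align16(int x) {
        return (x + 15) / 16 * 16;
    }

    /** Number of bytes one YV12 preview frame occupies. */
    static int frameSize(int width, int height) {
        int yStride = align16(width);
        int ySize = yStride * height;
        int cStride = align16(yStride / 2);
        int cSize = cStride * height / 2;
        return ySize + 2 * cSize; // Y plane, then V plane, then U plane
    }
}
```

For widths that are already multiples of 32 the padding disappears; for a width such as 176 the chroma stride is rounded up from 88 to 96, which is the case that naive size calculations get wrong.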

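If filters or other rendering must be applied to the frames, the image format has to be set to an RGB format:

    mParams.setPictureFormat(ImageFormat.JPEG);

Note that setPictureFormat only affects still pictures taken with takePicture; preview frames reach the GPU through the SurfaceTexture passed to setPreviewTexture.

For the other parameters, see: https://developer.android.com/reference/android/hardware/Camera.Parameters.html

The complete code is as follows: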

    public void initCamera(int previewRate) {
        if (mCameraDevice == null) {
            LogUtil.e(TAG, "initCamera: Camera is not opened!");
            return;
        }
        mParams = mCameraDevice.getParameters();

        // Log the supported still-picture formats
        List<Integer> supportedPictureFormats = mParams.getSupportedPictureFormats();
        for (int fmt : supportedPictureFormats) {
            LogUtil.i(TAG, String.format("Picture Format: %x", fmt));
        }
        // ImageFormat.JPEG replaces the deprecated PixelFormat.JPEG
        mParams.setPictureFormat(ImageFormat.JPEG);

        // Pick a picture size at least as large as the requested one; which candidate
        // wins depends on the ordering defined by comparatorBigger
        List<Camera.Size> picSizes = mParams.getSupportedPictureSizes();
        Camera.Size picSz = null;
        Collections.sort(picSizes, comparatorBigger);
        for (Camera.Size sz : picSizes) {
            LogUtil.i(TAG, String.format("Supported picture size: %d x %d", sz.width, sz.height));
            if (picSz == null || (sz.width >= mPictureWidth && sz.height >= mPictureHeight)) {
                picSz = sz;
            }
        }

        // Same selection for the preview size
        List<Camera.Size> prevSizes = mParams.getSupportedPreviewSizes();
        Camera.Size prevSz = null;
        Collections.sort(prevSizes, comparatorBigger);
        for (Camera.Size sz : prevSizes) {
            LogUtil.i(TAG, String.format("Supported preview size: %d x %d", sz.width, sz.height));
            if (prevSz == null || (sz.width >= mPreferPreviewWidth
                    && sz.height >= mPreferPreviewHeight)) {
                prevSz = sz;
            }
        }

        // Find the highest supported preview frame rate
        List<Integer> frameRates = mParams.getSupportedPreviewFrameRates();
        int fpsMax = 0;
        for (Integer n : frameRates) {
            LogUtil.i(TAG, "Supported frame rate: " + n);
            if (fpsMax < n) {
                fpsMax = n;
            }
        }

        mParams.setPreviewSize(prevSz.width, prevSz.height);
        mParams.setPictureSize(picSz.width, picSz.height);

        List<String> focusModes = mParams.getSupportedFocusModes();
        if (focusModes.contains(Camera.Parameters.FOCUS_MODE_CONTINUOUS_VIDEO)) {
            mParams.setFocusMode(Camera.Parameters.FOCUS_MODE_CONTINUOUS_VIDEO);
        }

        // The requested rate is overridden by the highest supported one
        previewRate = fpsMax;
        mParams.setPreviewFrameRate(previewRate); // set the camera preview frame rate
        // mParams.setPreviewFpsRange(20, 60);

        try {
            mCameraDevice.setParameters(mParams);
        } catch (Exception e) {
            e.printStackTrace();
        }

        // Read the parameters back: the camera may not have applied them as requested
        mParams = mCameraDevice.getParameters();
        Camera.Size szPic = mParams.getPictureSize();
        Camera.Size szPrev = mParams.getPreviewSize();
        mPreviewWidth = szPrev.width;
        mPreviewHeight = szPrev.height;
        mPictureWidth = szPic.width;
        mPictureHeight = szPic.height;
        LogUtil.i(TAG, String.format("Camera Picture Size: %d x %d", szPic.width, szPic.height));
        LogUtil.i(TAG, String.format("Camera Preview Size: %d x %d", szPrev.width, szPrev.height));
    }
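
The commented-out `setPreviewFpsRange(20, 60)` call above hints at the range-based alternative to `setPreviewFrameRate`. `getSupportedPreviewFpsRange()` returns `{min, max}` pairs scaled by 1000 (so 30 FPS is 30000), and `setPreviewFpsRange` expects the same unit. A minimal sketch of choosing a range for a target frame rate follows; `FpsRangePicker` is a hypothetical name, and preferring the narrowest matching range is an assumption.

```java
import java.util.List;

// Illustrative sketch; FpsRangePicker is hypothetical. Each int[] is {min, max} in
// frames per second multiplied by 1000, the unit getSupportedPreviewFpsRange() uses.
final class FpsRangePicker {
    /** Returns the narrowest range containing targetFps, or null if none does. */
    static int[] pick(List<int[]> ranges, int targetFps) {
        int target = targetFps * 1000;
        int[] best = null;
        for (int[] r : ranges) {
            boolean contains = r[0] <= target && target <= r[1];
            if (contains && (best == null || r[1] - r[0] < best[1] - best[0])) {
                best = r;
            }
        }
        return best;
    }
}
```

A non-null result would be applied with `mParams.setPreviewFpsRange(best[0], best[1])`.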

4. Encoding with MediaCodec

Encoding audio and encoding video with MediaCodec work in much the same way. Points to note about MediaCodec:

(1) MediaCodec has its own internal buffers

(2) Input (raw data): either a ByteBuffer or a Surface.

Audio data must be: 16-bit signed integer in native byte order

For video a Surface is preferable; at the native layer a Surface is a buffer, so it is more efficient. The Google API documentation says:

Codecs operate on three kinds of data: compressed data, raw audio data and raw video data. All three kinds of data can be processed using ByteBuffers, but you should use a Surface for raw video data to improve codec performance. Surface uses native video buffers without mapping or copying them to ByteBuffers; thus, it is much more efficient. You normally cannot access the raw video data when using a Surface, but you can use the ImageReader class to access unsecured decoded (raw) video frames. This may still be more efficient than using ByteBuffers, as some native buffers may be mapped into direct ByteBuffers. When using ByteBuffer mode, you can access raw video frames using the Image class and getInput/OutputImage(int).

The main steps are:

(1) prepare

(2) encode

(3) drainEnd & flushBuffer

4.1 Initialization: prepare

The core steps are:

    format = new MediaFormat();
    // set format
    encoder = MediaCodec.createEncoderByType(mime);
    encoder.configure(format, ...);
    encoder.start();
    mBufferInfo = new MediaCodec.BufferInfo();

In more detail:

    MediaFormat format = MediaFormat.createVideoFormat(MIME_TYPE, config.mWidth,
            config.mHeight);

    // Set some properties. Failing to specify some of these can cause the MediaCodec
    // configure() call to throw an unhelpful exception.
    format.setInteger(MediaFormat.KEY_COLOR_FORMAT,
            MediaCodecInfo.CodecCapabilities.COLOR_FormatSurface);
    format.setInteger(MediaFormat.KEY_BIT_RATE, config.mVBitRate);
    format.setInteger(MediaFormat.KEY_FRAME_RATE, config.mFPS);
    format.setInteger(MediaFormat.KEY_I_FRAME_INTERVAL, IFRAME_INTERVAL);
    if (VERBOSE) LogUtil.d(TAG, "format: " + format);

    // Create a MediaCodec encoder, and configure it with our format. Get a Surface
    // we can use for input and wrap it with a class that handles the EGL work.
    try {
        mEncoder = MediaCodec.createEncoderByType(MIME_TYPE);
    } catch (Exception e) {
        e.printStackTrace();
        if (mOnPrepare != null) {
            mOnPrepare.onPrepare(false);
        }
        return;
    }
    mEncoder.configure(format, null, null, MediaCodec.CONFIGURE_FLAG_ENCODE);
    // With Surface input, createInputSurface() must be called after configure()
    // and before start().

4.2 Encoding

The core loop is:

    while (true) {
        // (1) get status
        encoderStatus = mEncoder.dequeueOutputBuffer(mBufferInfo, TIMEOUT_USEC);
        // encoderStatus is one of:
        //   - wait for input: MediaCodec.INFO_TRY_AGAIN_LATER
        //   - end
        //   - error: < 0
        //   - ok: >= 0, the index of an output buffer

        // (2) get encoded data
        ByteBuffer encodedData = encoderOutputBuffers[encoderStatus];

        // (3) setup buffer info
        mBufferInfo.presentationTimeUs = mTimestamp;
        // adjust the ByteBuffer values to match BufferInfo (not needed?)
        encodedData.position(mBufferInfo.offset);
        encodedData.limit(mBufferInfo.offset + mBufferInfo.size);

        // (4) write to muxer
        mMuxer.writeSampleData(mTrackIndex, encodedData, mBufferInfo);

        // (5) release buffer
        mEncoder.releaseOutputBuffer(encoderStatus, false);
    }
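
The status handling in step (1) can be isolated as a small dispatch function, which makes the branches easier to reason about and to test off-device. This is only a sketch: `DrainStatus` and its `Action` enum are hypothetical, and the three negative constants are copied from the values of the corresponding `MediaCodec` fields so that the code runs without the Android SDK.

```java
// Illustrative sketch; DrainStatus is hypothetical. The three negative constants mirror
// MediaCodec.INFO_TRY_AGAIN_LATER, INFO_OUTPUT_FORMAT_CHANGED and INFO_OUTPUT_BUFFERS_CHANGED.
final class DrainStatus {
    static final int INFO_TRY_AGAIN_LATER = -1;
    static final int INFO_OUTPUT_FORMAT_CHANGED = -2;
    static final int INFO_OUTPUT_BUFFERS_CHANGED = -3;

    enum Action { WAIT_FOR_INPUT, START_MUXER, REFRESH_BUFFERS, IGNORE, WRITE_SAMPLE }

    /** Maps a dequeueOutputBuffer() result to what the drain loop should do next. */
    static Action classify(int status) {
        if (status >= 0) return Action.WRITE_SAMPLE; // index of a filled output buffer
        switch (status) {
            case INFO_TRY_AGAIN_LATER:        return Action.WAIT_FOR_INPUT;
            case INFO_OUTPUT_FORMAT_CHANGED:  return Action.START_MUXER;
            case INFO_OUTPUT_BUFFERS_CHANGED: return Action.REFRESH_BUFFERS;
            default:                          return Action.IGNORE;
        }
    }
}
```

On a device the constants would of course be referenced from `MediaCodec` directly rather than redefined.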

The detailed code is as follows:

    protected void drain(boolean endOfStream) {
        if (mWeakMuxer == null) {
            LogUtil.w(TAG, "muxer is unexpectedly null");
            return;
        }
        IMuxer muxer = mWeakMuxer.get();
        if (muxer == null) {
            // The weak reference has been cleared
            LogUtil.w(TAG, "muxer is unexpectedly null");
            return;
        }
        if (VERBOSE) LogUtil.d(TAG, "drain(" + endOfStream + ")");

        if (endOfStream) {
            if (VERBOSE) LogUtil.d(TAG, "sending EOS to encoder");
            mEncoder.signalEndOfInputStream();
        }

        // getOutputBuffers() is deprecated since API 21 in favor of getOutputBuffer(int)
        ByteBuffer[] encoderOutputBuffers = mEncoder.getOutputBuffers();
        while (true) {
            int encoderStatus = mEncoder.dequeueOutputBuffer(mBufferInfo, TIMEOUT_USEC);
            if (VERBOSE) LogUtil.d(TAG, "drain: status = " + encoderStatus);
            if (encoderStatus == MediaCodec.INFO_TRY_AGAIN_LATER) {
                // no output available yet
                if (!endOfStream) {
                    break; // out of while
                } else {
                    if (VERBOSE) LogUtil.d(TAG, "no output available, spinning to await EOS");
                }
            } else if (encoderStatus == MediaCodec.INFO_OUTPUT_BUFFERS_CHANGED) {
                // not expected for an encoder
                encoderOutputBuffers = mEncoder.getOutputBuffers();
            } else if (encoderStatus == MediaCodec.INFO_OUTPUT_FORMAT_CHANGED) {
                // should happen before receiving buffers, and should only happen once
                // if (muxer.isStarted()) {
                //     throw new RuntimeException("format changed twice");
                // }
                MediaFormat newFormat = mEncoder.getOutputFormat();
                LogUtil.d(TAG, "encoder output format changed: " + newFormat);

                // now that we have the Magic Goodies, start the muxer
                mTrackIndex = muxer.addTrack(IMuxer.TRACK_VIDEO_ID, newFormat);
                if (!muxer.start()) {
                    // The muxer starts only after every track is added; wait for it
                    synchronized (muxer) {
                        while (!muxer.isStarted() && !endOfStream) {
                            try {
                                LogUtil.d(TAG, "drain: wait...");
                                muxer.wait(100);
                            } catch (final InterruptedException e) {
                                break;
                            }
                        }
                    }
                }
            } else if (encoderStatus < 0) {
                LogUtil.w(TAG, "unexpected result from encoder.dequeueOutputBuffer: " +
                        encoderStatus);
                // let's ignore it
            } else {
                ByteBuffer encodedData = encoderOutputBuffers[encoderStatus];
                if (encodedData == null) {
                    throw new RuntimeException("encoderOutputBuffer " + encoderStatus +
                            " was null");
                }

                if ((mBufferInfo.flags & MediaCodec.BUFFER_FLAG_CODEC_CONFIG) != 0) {
                    // The codec config data was pulled out and fed to the muxer when we got
                    // the INFO_OUTPUT_FORMAT_CHANGED status. Ignore it.
                    if (VERBOSE) LogUtil.d(TAG, "ignoring BUFFER_FLAG_CODEC_CONFIG");
                    mBufferInfo.size = 0;
                }

                if (mBufferInfo.size != 0) {
                    if (!muxer.isStarted()) {
                        // throw new RuntimeException("muxer hasn't started");
                        return;
                    }
                    mBufferInfo.presentationTimeUs = mTimestamp;
                    // adjust the ByteBuffer values to match BufferInfo (not needed?)
                    encodedData.position(mBufferInfo.offset);
                    encodedData.limit(mBufferInfo.offset + mBufferInfo.size);
                    muxer.writeSampleData(mTrackIndex, encodedData, mBufferInfo);
                    if (VERBOSE) {
                        LogUtil.d(TAG, "sent " + mBufferInfo.size + " bytes to muxer, ts=" +
                                mBufferInfo.presentationTimeUs);
                    }
                }

                mEncoder.releaseOutputBuffer(encoderStatus, false);

                if ((mBufferInfo.flags & MediaCodec.BUFFER_FLAG_END_OF_STREAM) != 0) {
                    if (!endOfStream) {
                        LogUtil.w(TAG, "reached end of stream unexpectedly");
                    } else {
                        if (VERBOSE) LogUtil.d(TAG, "end of stream reached");
                    }
                    break; // out of while
                }
            } // if encode status
        } // while
    }
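
The drain code stamps every output buffer with `mTimestamp`, but the article does not show where that value comes from. MediaMuxer expects the samples of each track in chronological order, so whatever produces the timestamp should never go backwards. One possible approach, assuming the timestamp is derived from a monotonic clock such as `System.nanoTime()`, is sketched below; `PtsClock` is a hypothetical class and not part of the original project.

```java
// Illustrative sketch; PtsClock is hypothetical and not taken from the original project.
final class PtsClock {
    private boolean started = false;
    private long startNs;
    private long lastPtsUs = -1;

    /** Converts a monotonic nanosecond reading into a strictly increasing microsecond PTS. */
    synchronized long nextPtsUs(long nowNs) {
        if (!started) {
            started = true;
            startNs = nowNs; // the first frame defines time zero
        }
        long ptsUs = (nowNs - startNs) / 1000;
        if (ptsUs <= lastPtsUs) {
            ptsUs = lastPtsUs + 1; // never repeat or go backwards
        }
        lastPtsUs = ptsUs;
        return ptsUs;
    }
}
```

In the encoder this could be used as `mTimestamp = clock.nextPtsUs(System.nanoTime())` each time a frame is submitted.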

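4.3 drainEnd & flushBuffer

    mEncoder.signalEndOfInputStream();

4.4 Input data

For video, passing the texture is enough; the MediaCodec encoding call has to be made from within onDrawFrame, the GLSurfaceView renderer callback. For audio, call the following: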

    protected void encode(final ByteBuffer buffer, final int length,
                          final long presentationTimeUs) {
        if (!mRunning || mEncoder == null || !mCodecPrepared) {
            LogUtil.w(TAG, "encode: Audio encode thread is not running yet.");
            return;
        }
        // Throws IllegalStateException if the codec has not been started
        final ByteBuffer[] inputBuffers = mEncoder.getInputBuffers();
        while (mRunning) {
            final int inputBufferIndex = mEncoder.dequeueInputBuffer(TIMEOUT_USEC);
            if (inputBufferIndex >= 0) {
                // Copy the PCM data into the codec's input buffer
                final ByteBuffer inputBuffer = inputBuffers[inputBufferIndex];
                inputBuffer.clear();
                if (buffer != null) {
                    inputBuffer.put(buffer);
                }
                // if (DEBUG) LogUtil.v(TAG, "encode:queueInputBuffer");
                if (length <= 0) {
                    // send EOS
                    mIsEOS = true;
                    LogUtil.i(TAG, "send BUFFER_FLAG_END_OF_STREAM");
                    mEncoder.queueInputBuffer(inputBufferIndex, 0, 0,
                            presentationTimeUs, MediaCodec.BUFFER_FLAG_END_OF_STREAM);
                } else {
                    mEncoder.queueInputBuffer(inputBufferIndex, 0, length,
                            presentationTimeUs, 0);
                }
                break; // the buffer has been queued
            } else if (inputBufferIndex == MediaCodec.INFO_TRY_AGAIN_LATER) {
                // wait for MediaCodec encoder is ready to encode
                // nothing to do here because MediaCodec#dequeueInputBuffer(TIMEOUT_USEC)
                // will wait for maximum TIMEOUT_USEC(10msec) on each call
                LogUtil.d(TAG, "encode: wait for MediaCodec encoder is ready to encode");
            }
        }
    }
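
The `presentationTimeUs` argument of `encode` has to come from somewhere. For 16-bit PCM it can be derived from the number of sample frames already submitted, which keeps audio timestamps evenly spaced regardless of thread scheduling. The sketch below shows that arithmetic; `AudioPts` is a hypothetical helper, and deriving the timestamp from the sample count rather than from a wall clock is an assumption about the design, not something the article states.

```java
// Illustrative sketch; AudioPts is hypothetical. Assumes 16-bit PCM, as the text requires.
final class AudioPts {
    private static final int BYTES_PER_SAMPLE = 2; // 16-bit signed PCM

    private final int sampleRate;
    private final int channels;
    private long framesSubmitted = 0;

    AudioPts(int sampleRate, int channels) {
        this.sampleRate = sampleRate;
        this.channels = channels;
    }

    /** Returns the PTS in microseconds for a buffer of the given length, then advances. */
    long nextPtsUs(int lengthBytes) {
        long ptsUs = framesSubmitted * 1_000_000L / sampleRate;
        framesSubmitted += lengthBytes / (BYTES_PER_SAMPLE * channels);
        return ptsUs;
    }
}
```

With 44100 Hz mono audio and 2048-byte buffers, each buffer holds 1024 sample frames, so consecutive timestamps are roughly 23.2 ms apart.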

5. MediaMuxer

(1) Initialization

    // format is an output container constant such as
    // MediaMuxer.OutputFormat.MUXER_OUTPUT_MPEG_4
    mMuxer = new MediaMuxer(mOutputPath, format);

(2) Add the audio/video tracks

    /**
     * assign encoder to muxer
     *
     * @param trackID
     * @param format
     * @return minus value indicate error
     */
    public synchronized int addTrack(int trackID, final MediaFormat format) {
        if (mIsStarted)
            throw new IllegalStateException("muxer already started");
        final int trackIndex = mMuxer.addTrack(format);
        if (trackID == TRACK_VIDEO_ID) {
            LogUtil.d(TAG, "addTrack: add video track = " + trackIndex);
            mIsVideoAdded = true;
        } else if (trackID == TRACK_AUDIO_ID) {
            LogUtil.d(TAG, "addTrack: add audio track = " + trackIndex);
            mIsAudioAdded = true;
        }
        return trackIndex;
    }

(3) Start

    /**
     * request readyStart recording from encoder
     *
     * @return true when muxer is ready to write
     */
    @Override
    public synchronized boolean start() {
        LogUtil.v(TAG, "readyStart:");
        // Start only once every expected track has been added
        if ((mHasAudio == mIsAudioAdded)
                && (mHasVideo == mIsVideoAdded)) {
            mMuxer.start();
            mIsStarted = true;
            if (mOnPrepared != null) {
                mOnPrepared.onPrepare(true);
            }
            LogUtil.v(TAG, "MediaMuxer started:");
        }
        return mIsStarted;
    }

(4) Write data

    /**
     * write encoded data to muxer
     *
     * @param trackIndex
     * @param byteBuf
     * @param bufferInfo
     */
    @Override
    public synchronized void writeSampleData(final int trackIndex, final ByteBuffer byteBuf,
                                             final MediaCodec.BufferInfo bufferInfo) {
        // Samples that arrive before the muxer has started are dropped
        if (mIsStarted)
            mMuxer.writeSampleData(trackIndex, byteBuf, bufferInfo);
    }
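
The bookkeeping behind `addTrack` and `start` is easy to get wrong, because MediaMuxer must be started exactly once and only after all tracks exist. The sketch below models just that gate, without touching MediaMuxer, so the logic can be checked on a plain JVM. `TrackGate` is a hypothetical class; the extra `!started` guard and the `notifyAll()` call are additions of this sketch and are not in the code above.

```java
// Illustrative sketch; TrackGate is hypothetical and only models the bookkeeping,
// it does not touch MediaMuxer.
final class TrackGate {
    private final boolean hasAudio;
    private final boolean hasVideo;
    private boolean audioAdded;
    private boolean videoAdded;
    private boolean started;

    TrackGate(boolean hasAudio, boolean hasVideo) {
        this.hasAudio = hasAudio;
        this.hasVideo = hasVideo;
    }

    synchronized void addTrack(boolean isVideo) {
        if (started) throw new IllegalStateException("muxer already started");
        if (isVideo) videoAdded = true; else audioAdded = true;
    }

    /** Starts once every expected track has been added; returns whether the muxer is running. */
    synchronized boolean start() {
        if (!started && hasAudio == audioAdded && hasVideo == videoAdded) {
            started = true; // the real class would call mMuxer.start() here, exactly once
            notifyAll();    // wake encoders blocked waiting for the muxer
        }
        return started;
    }
}
```

With both tracks expected, the first encoder to call `start()` gets `false` and waits; the second call, made after the other track is added, flips the gate and wakes the waiter.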

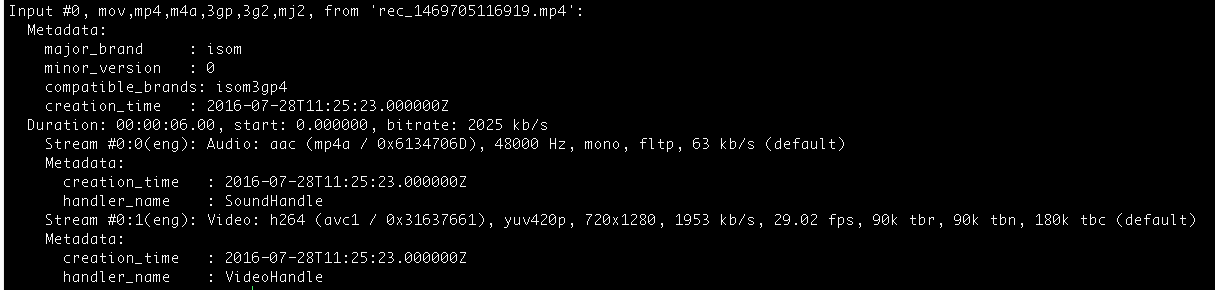

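6. Hardware vs. software encoding

The FFmpeg encoder was configured with:

    av_opt_set(c->priv_data, "preset", "superfast", 0);

With the same bitrate, FPS, and other settings:

(1) FPS

Hardware encoding: FPS = 30; software encoding: FPS < 17

(2) Image quality

So far the two look much the same in image quality; I plan to verify this later with a professional video quality analysis tool.

Software encoding:

Hardware encoding: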

7. References

[1] Grafika

[2] Google API MediaCodec